CPU Surge During Application Startup

Overview

When an application is released or restarted, CPU utilization often rises sharply and can potentially consume all cluster resources. This is mainly because the Java Virtual Machine (JVM) performs class loading and object initialization during startup, so the CPU handles more compilation work than it does in steady-state operation.

In Kubernetes, applications run as containers within Pods, and each container can set Requests and Limits to control its minimum and maximum resource usage. If the Limit is set too low, the application may start slowly or fail to start because of insufficient resources. Conversely, if the Limit is set too high, the application may use excessive resources while running and compete with other applications.

Operation Steps

Set the application's startup time and a multiplier for the Limit. The multiplier raises the upper bound on the resources the application can use during the initial startup phase.

Notes

Resource surge at application startup depends on the kernel of native nodes, so this feature is supported only on native nodes.

Before using this feature, you need to install the QoS Agent in advance. Ensure the QoS Agent component version is 1.1.4 or above.

How the Feature Works

In Kubernetes, resource limits (Limits) are controlled by the CPU cgroup control module’s cpu.cfs_period_us and cpu.cfs_quota_us configurations. Kubernetes configures these settings for the container’s cgroup. By adjusting the cpu.cfs_quota_us value on the node where the Pod is located, you can achieve temporary resource surges, thereby increasing the resource usage limit during the application startup phase.
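The relationship between a CPU Limit and the cgroup quota can be sketched as follows. This is a minimal illustration, not the QoS Agent's implementation: the function names are hypothetical, and the period of 100000 microseconds is assumed to be the value of cpu.cfs_period_us on the node.

```python
from decimal import Decimal

# Assumed value of cpu.cfs_period_us on the node (microseconds).
DEFAULT_PERIOD_US = 100_000


def base_quota_us(limit_cores: str, period_us: int = DEFAULT_PERIOD_US) -> int:
    """Quota for a CPU Limit: cpu.cfs_period_us multiplied by the Limit in cores."""
    return int(Decimal(limit_cores) * period_us)


def surged_quota_us(quota_us: int, multiplier: str) -> int:
    """Quota after applying the surge multiplier (hypothetical helper)."""
    return int(Decimal(multiplier) * quota_us)


if __name__ == "__main__":
    base = base_quota_us("0.5")
    print(base, surged_quota_us(base, "1.2"))  # prints "50000 60000"
```

Decimal is used instead of float so that a multiplier written as a string, such as "1.2", does not introduce rounding error into the quota.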

Operation Steps

Installing Add-ons

1. Log in to the Tencent Kubernetes Engine Console, and choose Cluster from the left navigation bar.

2. In the cluster list, click on the desired cluster ID to access its detailed page.

3. Select Add-on management from the left-side menu, and click on Create within the component management page.

4. On the New Add-on Management page, check QoS Agent.

5. Click on Done to install the add-on.

Resource Surge Capability at Application Startup

1. On the Component Management page, check the QoS Agent component version. Ensure the QoS Agent component version is 1.1.4 or above. If the version is below this requirement, click Upgrade on the right side of the component to upgrade.

2. Create a PodQoS custom resource with kubectl. Its label selector must match the workload that requires a surge. Example code is as follows:

apiVersion: ensurance.crane.io/v1alpha1
kind: PodQOS
metadata:
  name: exceed
spec:
  labelSelector:
    matchLabels:
      app: 0kusa3 # Match the label on the workload that needs a surge.
  resourceQOS:
    cpuQOS:
      limitExceed:
        time: 5 # Length of the surge window after startup (in minutes)
        value: "1.2" # Multiplier applied to the Limit during the surge

3. Create the workload. Example code is as follows:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  labels:
    app: 0kusa3 # Must be consistent with the matchLabels in the PodQoS object.
spec:
  replicas: 3
  selector:
    matchLabels:
      app: 0kusa3
  template:
    metadata:
      labels:
        app: 0kusa3
    spec:
      containers:
      - name: nginx
        image: nginx:1.14.2
        ports:
        - containerPort: 80

4. Log in to the node where the Pod is located and check the cfs_quota value corresponding to the container on the node.

Path:

/sys/fs/cgroup/cpu/kubepods/burstable/pod<id>/<containerid>/cpu.cfs_quota_us

Example:

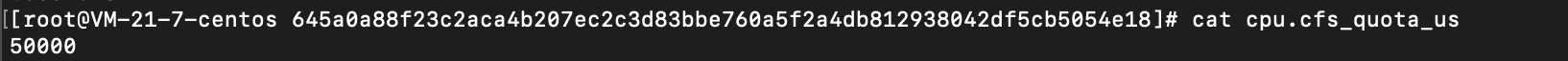

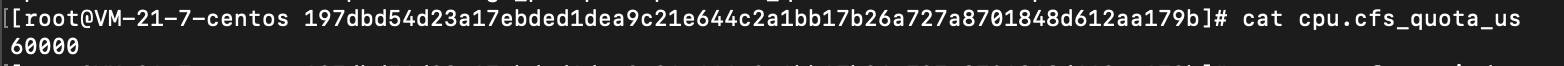

/sys/fs/cgroup/cpu/kubepods/burstable/pod44ad741a-cbe6-48a2-a5b7-b6920df2fe40/645a0a88f23c2aca4b207ec2c3d83bbe760a5f2a4db812938042df5cb5054e18/cpu.cfs_quota_us

Where:

pod<id>: <id> comes from the UID field in the Pod YAML.

<containerid>: comes from the containerID field in the Pod YAML.

Before creating PodQoS, the value of cpu.cfs_quota_us is cpu.cfs_period_us × Limit. For example: 100000 × 0.5 cores = 50000.

After creating PodQoS, within the first 5 minutes after the Pod is recreated, the new cpu.cfs_quota_us value is the old value multiplied by 1.2, meaning the Limit is increased. Continuing the example: 50000 × 1.2 = 60000.
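When checking the value on a node, it can help to know what to expect at a given Pod age. The sketch below is an illustration only: the function name and parameters are hypothetical, and it assumes that the quota returns to its original value once the surge window has passed, which the text implies but does not state explicitly.

```python
from decimal import Decimal


def expected_quota_us(base_quota_us: int, multiplier: str,
                      window_min: int, pod_age_min: float) -> int:
    """Expected cpu.cfs_quota_us for a Pod of the given age (hypothetical helper)."""
    # A quota of -1 means the cgroup has no CPU limit, so there is nothing to scale.
    if base_quota_us < 0:
        return base_quota_us
    # Inside the surge window the Limit is multiplied.
    if 0 <= pod_age_min < window_min:
        return int(Decimal(multiplier) * base_quota_us)
    # Assumption: outside the window the original quota applies again.
    return base_quota_us


if __name__ == "__main__":
    print(expected_quota_us(50000, "1.2", 5, 2))  # inside the window
    print(expected_quota_us(50000, "1.2", 5, 6))  # after the window
```

Comparing this value with the content of cpu.cfs_quota_us shortly after the Pod is recreated is one way to confirm that the PodQoS object matched the workload.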

Note:

The Pod ID will change after the Pod is recreated.
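Because the Pod ID changes, the path has to be rebuilt each time the Pod is recreated. A minimal sketch of that step follows. The function name and the qos_class parameter are hypothetical, and the layout is assumed to be exactly the one shown in the path above; nodes with a different cgroup layout will differ. The containerID in the Pod YAML usually carries a runtime prefix such as containerd://, which the sketch strips.

```python
from pathlib import PurePosixPath

# Assumed cgroup root, taken from the example path in this document.
CGROUP_ROOT = PurePosixPath("/sys/fs/cgroup/cpu/kubepods")


def quota_path(pod_uid: str, container_id: str, qos_class: str = "burstable") -> str:
    """Build the cpu.cfs_quota_us path for one container (hypothetical helper)."""
    # Drop a runtime prefix such as "containerd://" or "docker://" if present.
    bare_id = container_id.split("://", 1)[-1]
    return str(CGROUP_ROOT / qos_class / f"pod{pod_uid}" / bare_id / "cpu.cfs_quota_us")


if __name__ == "__main__":
    print(quota_path(
        "44ad741a-cbe6-48a2-a5b7-b6920df2fe40",
        "containerd://645a0a88f23c2aca4b207ec2c3d83bbe760a5f2a4db812938042df5cb5054e18",
    ))
```

With the UID and container ID from the example, this reproduces the example path shown earlier.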
