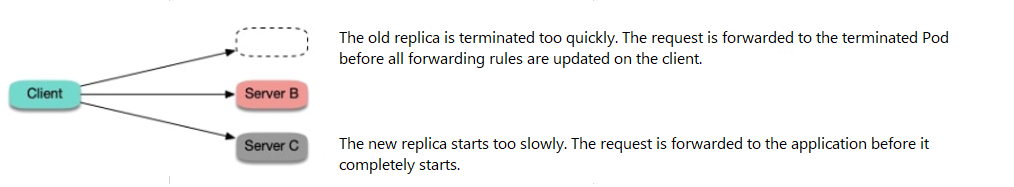

Add preStop to the container so that the Pod sleeps for a while before it is actually terminated. During the sleep, kube-proxy on the client node updates all the forwarding rules, and only then is the container terminated. The Pod therefore keeps running for a short time after termination begins, and it can still process requests normally if new requests are forwarded to it because the forwarding rules on the client have not been updated yet. This avoids connection exceptions. The method sounds inelegant but works well in practice. There is no silver bullet in a distributed architecture, and you can only try to find and implement the best solution under the current design.

Add ReadinessProbe to the container so that the Service Endpoint is updated only after all processes in the container have actually started. kube-proxy on the client node then updates the forwarding rules and begins forwarding incoming traffic. This ensures that traffic is forwarded only after the Pod is completely ready, which avoids connection exceptions.

Sample YAML configuration:

    readinessProbe:
      httpGet:
        path: /healthz
        port: 80
        httpHeaders:
        - name: X-Custom-Header
          value: Awesome
      initialDelaySeconds: 10
      timeoutSeconds: 1
    lifecycle:
      preStop:
        exec:
          command: ["/bin/bash", "-c", "sleep 10"]
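
Both settings in the sample belong to a single container entry in the Pod template. The following is a minimal sketch of how they might sit inside a Deployment. The name demo-app, its labels, the replica count, and the image are hypothetical placeholders and do not come from this page. The sketch also assumes that the image serves /healthz on port 80 and ships /bin/bash.

```yaml
# Minimal sketch only. The name, labels, replica count, and image are hypothetical.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-app
spec:
  replicas: 2
  selector:
    matchLabels:
      app: demo-app
  template:
    metadata:
      labels:
        app: demo-app
    spec:
      # Must be longer than the preStop sleep below; 30 is the Kubernetes default.
      terminationGracePeriodSeconds: 30
      containers:
      - name: demo-app
        image: registry.example.com/demo-app:1.0  # hypothetical image
        ports:
        - containerPort: 80
        # The Pod joins the Service Endpoint only after this probe succeeds.
        readinessProbe:
          httpGet:
            path: /healthz
            port: 80
          initialDelaySeconds: 10
          timeoutSeconds: 1
        # Delay shutdown so kube-proxy can update forwarding rules first.
        lifecycle:
          preStop:
            exec:
              command: ["/bin/bash", "-c", "sleep 10"]
```

The time spent in the preStop hook counts against terminationGracePeriodSeconds, so if you lengthen the sleep, keep the grace period larger than the sleep plus the time the application needs to shut down.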
