Data Consumption Guide

Overview

After data is synced to Kafka, you can consume the subscribed data through a Kafka client (version 0.11 or later, available at DOWNLOAD). This document provides client consumption demos in Java, Go, and Python so that you can quickly test data consumption and see how the data is parsed.

Note

The demo only prints out the consumed data and does not process it further. You need to write your own data processing logic based on the demo. You can also use Kafka clients in other languages to consume and parse data.

The upper limit of message size in the target CKafka instance must be greater than the maximum size of a single row of data in the source database table so that data can be synced to CKafka normally.

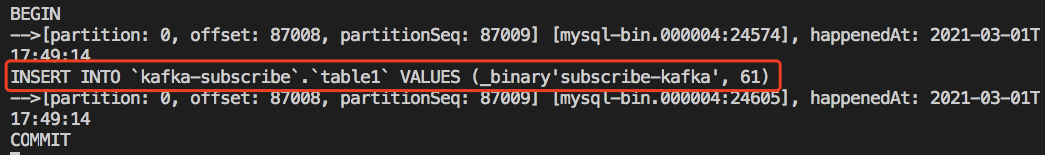

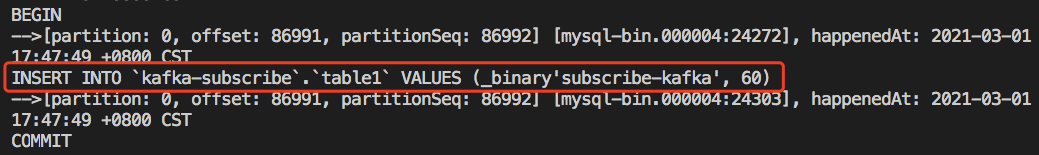

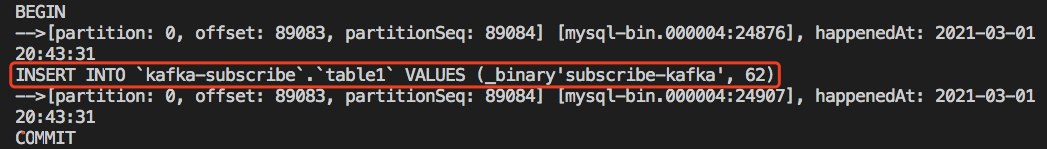

In scenarios where only the specified databases and tables (part of the source instance objects) are synced and single-partition Kafka topics are used, only the data of the synced objects will be written to Kafka topics after DTS parses incremental data. The data of non-sync objects will be converted into empty transactions and then written to Kafka topics. Therefore, there are empty transactions during message consumption. The BEGIN and COMMIT messages in the empty transactions contain the GTID information, which can ensure the GTID continuity and integrity. In the consumption demos for MySQL/TDSQL-C for MySQL, multiple empty transactions have been compressed to reduce the number of messages.
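As a rough illustration of the compression described above, the following Python sketch collapses each run of consecutive empty transactions into the last one of that run, so the most recent GTID is still seen by the consumer. It is not the demo's actual logic. The event shape (dictionaries with hypothetical `type` and `gtid` keys) and the assumption that the stream consists only of BEGIN ... COMMIT transactions are made up for illustration.

```python
from typing import Dict, Iterable, Iterator, List


def compress_empty_transactions(events: Iterable[Dict]) -> Iterator[Dict]:
    """Yield events, keeping only the last transaction of each run of empty ones.

    Assumes (hypothetically) that every event is a dict with a "type" key and
    that the stream is made of BEGIN ... COMMIT transactions.
    """
    pending_empty: List[Dict] = []  # newest empty transaction not yet emitted
    current: List[Dict] = []        # events of the transaction being read
    for event in events:
        current.append(event)
        if event["type"] != "COMMIT":
            continue
        has_rows = any(e["type"] not in ("BEGIN", "COMMIT") for e in current)
        if has_rows:
            # Emit the retained empty transaction first, then the real one.
            yield from pending_empty
            pending_empty = []
            yield from current
        else:
            # Replace any older empty transaction with this newer one.
            pending_empty = current
        current = []
    yield from pending_empty
    yield from current  # events of a trailing, unfinished transaction, if any


sample = [
    {"type": "BEGIN", "gtid": "g1"}, {"type": "COMMIT", "gtid": "g1"},
    {"type": "BEGIN", "gtid": "g2"}, {"type": "COMMIT", "gtid": "g2"},
    {"type": "BEGIN", "gtid": "g3"}, {"type": "INSERT", "gtid": "g3"},
    {"type": "COMMIT", "gtid": "g3"},
    {"type": "BEGIN", "gtid": "g4"}, {"type": "COMMIT", "gtid": "g4"},
]
compressed = list(compress_empty_transactions(sample))
```

In this sample, the empty transaction `g1` is dropped because `g2` supersedes it, while `g3` (which carries a row change) and the trailing empty transaction `g4` are kept.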

To ensure that writing can resume from where the task was paused, DTS adopts a checkpoint mechanism for data sync links where Kafka is the target end. Specifically, when messages are written to Kafka topics, a checkpoint message is inserted every 10 seconds to mark the data sync offset. When the task is resumed after being interrupted, data can be rewritten starting from the checkpoint message. The consumer commits a consumption offset every time it encounters a checkpoint message so that the consumption offset is updated promptly.
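The consumer-side behavior described above can be sketched as a small loop: ordinary messages are handed to the processing logic, and an offset is committed whenever a checkpoint message is seen. This is a minimal sketch, not the demo's code. The message shape (dictionaries with hypothetical `type` and `offset` keys, and the `"CHECKPOINT"` type value) is an assumption, and `handle` and `commit` are placeholder callbacks. The committed value is the checkpoint offset plus one, following the Kafka convention that a committed offset names the next message to read.

```python
from typing import Callable, Dict, Iterable, List


def consume(
    messages: Iterable[Dict],
    handle: Callable[[Dict], None],
    commit: Callable[[int], None],
) -> None:
    """Process messages and commit an offset at every checkpoint message."""
    for msg in messages:
        if msg["type"] == "CHECKPOINT":  # hypothetical marker for checkpoint messages
            # Commit the position of the next message to read.
            commit(msg["offset"] + 1)
        else:
            handle(msg)


# Usage with in-memory stand-ins for a Kafka client.
handled: List[int] = []
committed: List[int] = []
stream = [
    {"type": "INSERT", "offset": 0},
    {"type": "UPDATE", "offset": 1},
    {"type": "CHECKPOINT", "offset": 2},
    {"type": "DELETE", "offset": 3},
    {"type": "CHECKPOINT", "offset": 4},
]
consume(stream, lambda m: handled.append(m["offset"]), committed.append)
```

With a real client, the `commit` callback would wrap that client's offset commit call, and automatic offset commits would typically be turned off so that offsets advance only at checkpoints.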

When the selected data format is JSON, if you have used or are familiar with the open-source subscription tool Canal, you can convert the consumed JSON data to a Canal-compatible data format for subsequent processing. The demo already supports this feature; to enable it, add the trans2canal parameter to the demo startup parameters. Currently, this feature is supported only in Java.

Downloading Consumption Demos

When configuring the sync task, you can select Avro or JSON as the data format. Avro adopts a binary format with higher consumption efficiency, while JSON adopts an easier-to-use lightweight text format. The reference demo varies by the selected data format.

The following demo already contains the Avro/JSON protocol file, so you don't need to download it separately.

For the description of the logic and key parameters in the demo, see Demo Description.

Instructions for the Java Demo

Compiling environment: Maven or Gradle as the package management tool, and JDK 8. You can choose either package management tool. The following uses Maven as an example.

The steps are as follows:

1. Download the Java demo and decompress it.

2. Access the decompressed directory. Maven model and pom.xml files are placed in the directory for your use as needed.

Package with Maven by running mvn clean package.

3. Run the demo.

After packaging the project with Maven, go to the target folder and run:

java -jar consumerDemo-avro-1.0-SNAPSHOT.jar --brokers xxx --topic xxx --group xxx --trans2sql

brokers is the CKafka access address, and topic is the topic name configured for the data sync task. If there are multiple topics, the data in each topic needs to be consumed separately. To obtain the values of brokers and topic, go to the Data Sync page and click View in the Operation column of the sync task list.

group is the consumer group name. You can create a consumer in CKafka in advance or enter a custom group name here.

trans2sql indicates whether to enable conversion to SQL statements. In the Java code, the conversion is enabled if this parameter is carried.

trans2canal indicates whether to print the data in Canal format. The conversion is enabled if this parameter is carried.

Note:

If trans2sql is carried, javax.xml.bind.DatatypeConverter.printHexBinary() will be used to convert byte values to hex values. The javax.xml.bind package is no longer bundled with the JDK as of Java 11, so use JDK 1.8 to avoid incompatibility, or replace this call when building with a later JDK. If you don't need SQL conversion, comment this parameter out.

4. Observe the consumption.
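To make the byte-to-hex step in the note above concrete, here is a small sketch of what a conversion to SQL might look like, written in Python for brevity. It is not the demo's implementation. `to_hex` mirrors the uppercase output of printHexBinary(), while `sql_literal`, `insert_statement`, and the row-as-dictionary shape are hypothetical helpers introduced only for illustration. The quoting is deliberately simple and is meant for printing, not for executing statements against a database.

```python
from typing import Any, Dict


def to_hex(data: bytes) -> str:
    """Return uppercase hex digits, two per byte (like printHexBinary)."""
    return data.hex().upper()


def sql_literal(value: Any) -> str:
    """Render a Python value as a SQL literal for display purposes only."""
    if value is None:
        return "NULL"
    if isinstance(value, (bytes, bytearray)):
        return "X'" + to_hex(bytes(value)) + "'"  # binary data as a hex literal
    if isinstance(value, bool):
        return "1" if value else "0"
    if isinstance(value, (int, float)):
        return str(value)
    # Simplistic quoting: double any single quote inside the string.
    return "'" + str(value).replace("'", "''") + "'"


def insert_statement(table: str, row: Dict[str, Any]) -> str:
    """Build an INSERT statement from a column-name-to-value mapping."""
    columns = ", ".join(f"`{name}`" for name in row)
    values = ", ".join(sql_literal(v) for v in row.values())
    return f"INSERT INTO `{table}` ({columns}) VALUES ({values});"


statement = insert_statement(
    "t", {"id": 1, "name": "O'Neil", "blob": b"\x01\xfe", "note": None}
)
```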

Instructions for the Go Demo

Compiling environment: Go 1.12 or later, with the Go module environment configured.

The steps are as follows:

1. Download the Go demo and decompress it.

2. Access the decompressed directory and run the following command to generate the executable file subscribe:

go build -o subscribe ./main/main.go

3. Run the demo:

./subscribe --brokers=xxx --topic=xxx --group=xxx --trans2sql=true

brokers is the CKafka access address, and topic is the topic name configured for the data sync task. If there are multiple topics, the data in each topic needs to be consumed separately. To obtain the values of brokers and topic, go to the Data Sync page and click View in the Operation column of the sync task list.

group is the consumer group name. You can create a consumer in CKafka in advance or enter a custom group name here.

trans2sql indicates whether to enable conversion to SQL statements.

4. Observe the consumption.

Instructions for the Python3 Demo

Runtime environment: Python3 and pip3 (for dependency package installation).

Use pip3 to install the dependency packages:

pip install flag
pip install kafka-python
pip install avro

The steps are as follows:

1. Download the Python3 demo and decompress it.

2. Run the demo:

python main.py --brokers=xxx --topic=xxx --group=xxx --trans2sql=1

brokers is the CKafka access address, and topic is the topic name configured for the data sync task. If there are multiple topics, the data in each topic needs to be consumed separately. To obtain the values of brokers and topic, go to the Data Sync page and click View in the Operation column of the sync task list.

group is the consumer group name. You can create a consumer in CKafka in advance or enter a custom group name here.

trans2sql indicates whether to enable conversion to SQL statements.

3. Observe the consumption.
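For readers writing their own consumer, the startup parameters above could be parsed roughly as follows. This sketch uses the standard-library argparse module rather than the flag package the demo installs, so it is not the demo's code. The function names `parse_args` and `consumer_config` are hypothetical, and treating brokers as a comma-separated list is an assumption. The keys in the returned dictionary (`bootstrap_servers`, `group_id`, `enable_auto_commit`) are keyword arguments accepted by kafka-python's `KafkaConsumer`.

```python
import argparse
from typing import Any, Dict, List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse the same startup parameters that the demo command line uses."""
    parser = argparse.ArgumentParser(description="Consume synced data from CKafka")
    parser.add_argument("--brokers", required=True, help="CKafka access address")
    parser.add_argument("--topic", required=True, help="topic of the data sync task")
    parser.add_argument("--group", required=True, help="consumer group name")
    parser.add_argument("--trans2sql", type=int, choices=(0, 1), default=0,
                        help="1 to enable conversion to SQL statements")
    return parser.parse_args(argv)


def consumer_config(args: argparse.Namespace) -> Dict[str, Any]:
    """Build keyword arguments for a Kafka consumer from the parsed parameters."""
    return {
        # Assumption: several broker addresses may be given, separated by commas.
        "bootstrap_servers": args.brokers.split(","),
        "group_id": args.group,
        # Assumption: offsets are committed manually rather than automatically.
        "enable_auto_commit": False,
    }


args = parse_args(["--brokers=a:9092,b:9092", "--topic=t", "--group=g", "--trans2sql=1"])
config = consumer_config(args)
```

With kafka-python installed, a consumer could then be created with `KafkaConsumer(args.topic, **config)`.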