
High Availability

Cluster-Level High Availability (Cross-AZ Deployment)

TDMQ for MQTT provides cluster-level high availability through cross-availability zone (AZ) deployment, which improves service stability and disaster recovery capability. When purchasing an MQTT instance, you only need to select the region where the MQTT cluster is located. The server deploys the cluster across multiple AZs by default when it is created.

Deployment Architecture

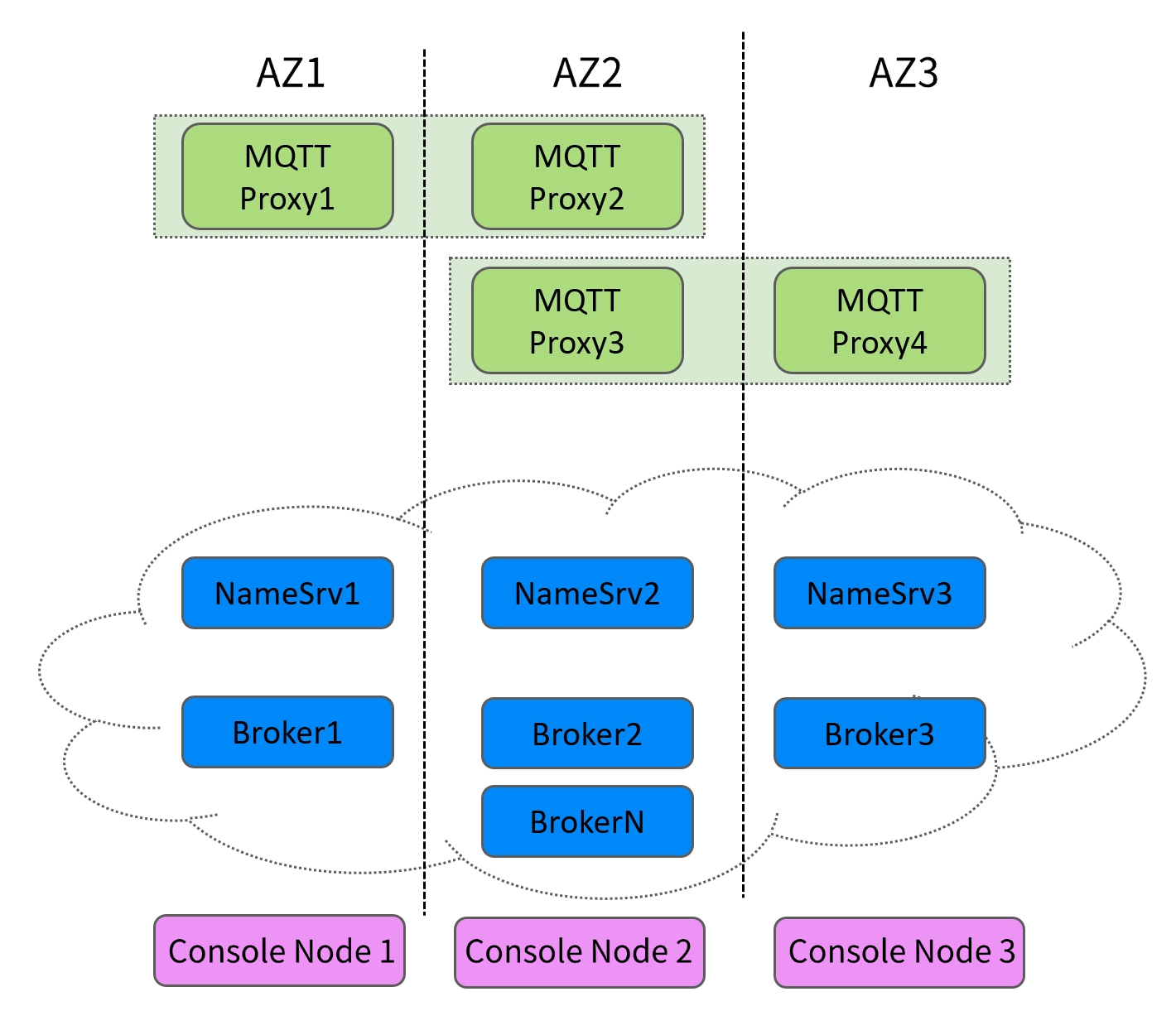

The cross-AZ deployment architecture of MQTT is divided into the network layer, data layer, and control layer.

Network Layer

MQTT exposes multiple cluster access addresses to clients in the form of domain names. After connecting to a cluster access address, clients communicate with the MQTT data layer. The services behind these domain names can fail over to other AZs at any time: when an AZ becomes unavailable, the backend service switches to other available nodes in the same region, thereby achieving cross-AZ disaster recovery.

Data Layer

The MQTT data layer is deployed in a distributed manner, with multiple components deployed across AZs.

The Proxy component is stateless. When an AZ (such as AZ1) or its Proxy fails, the server automatically removes the failed Proxy from the backend server pool and attempts to create a replacement Proxy node. All new client connections and requests are then forwarded to Proxy nodes in other available AZs (such as AZ2 and AZ3).

Clients always communicate with the same domain name (the cluster access address mentioned above) and are unaware of backend Proxy switchovers, so no configuration changes or restarts are required. They may observe only a brief network jitter or timeout lasting a few seconds, after which connections are automatically restored. After AZ1 recovers, server-side health checks detect that its Proxy instances are healthy again and gradually add them back to the server pool to resume receiving traffic.
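The removal and restoration of Proxy nodes described above can be pictured with a small simulation. This is only an illustrative sketch, not the TDMQ implementation: the `ProxyPool` class, its method names, the node names, and the round-robin selection are all assumptions made for the example, and the pool re-admits a recovered AZ at once rather than gradually.

```python
class ProxyPool:
    """Toy backend server pool keyed by AZ (hypothetical model)."""

    def __init__(self, nodes_by_az):
        self.nodes_by_az = dict(nodes_by_az)      # AZ name -> list of Proxy node names
        self.healthy_azs = set(self.nodes_by_az)  # every AZ starts out healthy
        self._next = 0                            # round-robin cursor

    def mark_unhealthy(self, az):
        """A health check failed: stop sending traffic to this AZ."""
        self.healthy_azs.discard(az)

    def mark_healthy(self, az):
        """A health check passed again: the AZ may receive traffic."""
        if az in self.nodes_by_az:
            self.healthy_azs.add(az)

    def pick(self):
        """Return the Proxy node that serves the next new connection."""
        candidates = [
            node
            for az in sorted(self.healthy_azs)
            for node in self.nodes_by_az[az]
        ]
        if not candidates:
            raise RuntimeError("no healthy Proxy nodes available")
        node = candidates[self._next % len(candidates)]
        self._next += 1
        return node


pool = ProxyPool({"AZ1": ["proxy-1a"], "AZ2": ["proxy-2a"], "AZ3": ["proxy-3a"]})

pool.mark_unhealthy("AZ1")                        # AZ1 fails
after_failure = {pool.pick() for _ in range(6)}   # only AZ2 and AZ3 nodes are chosen

pool.mark_healthy("AZ1")                          # AZ1 recovers
after_recovery = {pool.pick() for _ in range(6)}  # all three AZs serve traffic again
```

Because the client only ever sees the cluster access address, nothing in this selection logic is visible on the client side.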

Brokers are responsible for message storage. Because clients do not communicate directly with Broker nodes, they cannot directly sense the health status of the Brokers. When an AZ (such as AZ1) or its Brokers fail, the routing information in the NameServer cluster is updated to indicate that the queues for all affected topics are now served by new Broker nodes (for example, those in AZ2). All Proxy nodes then pull the latest routing information from the NameServer cluster and route new client requests to Broker nodes in AZ2.
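The interplay between the NameServer routing update and the Proxy pull can be sketched as follows. This is a simplified model under stated assumptions: the `NameServer` and `Proxy` classes, the version counter, and the topic and Broker names are hypothetical and only illustrate the idea that a Proxy routes with its cached table until it pulls the updated one.

```python
class NameServer:
    """Holds the authoritative topic -> Broker routing table (hypothetical model)."""

    def __init__(self, routes):
        self.routes = dict(routes)
        self.version = 1  # bumped whenever the routing table changes

    def fail_over(self, failed_broker, replacement):
        """Reassign every topic served by the failed Broker to a replacement."""
        changed = False
        for topic, broker in list(self.routes.items()):
            if broker == failed_broker:
                self.routes[topic] = replacement
                changed = True
        if changed:
            self.version += 1


class Proxy:
    """Routes client requests using a cached copy of the routing table."""

    def __init__(self, name_server):
        self.name_server = name_server
        self.routes = {}
        self.version = 0
        self.refresh()

    def refresh(self):
        """Pull the latest routing information if it has changed."""
        if self.name_server.version != self.version:
            self.routes = dict(self.name_server.routes)
            self.version = self.name_server.version

    def route(self, topic):
        return self.routes[topic]


ns = NameServer({"sensors/temp": "broker-az1", "sensors/door": "broker-az2"})
proxy = Proxy(ns)

ns.fail_over("broker-az1", "broker-az2")  # AZ1 Brokers fail, routes are updated
stale = proxy.route("sensors/temp")       # cached table still points at AZ1
proxy.refresh()                           # Proxy pulls the latest routes
fresh = proxy.route("sensors/temp")       # requests now go to AZ2
```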

Control Layer

Likewise, the MQTT console supports cross-AZ deployment. Console nodes do not handle data traffic; one set of management and control services is deployed per region.

Data-Level High Availability (Message Persistence)

One of the advantages of TDMQ for MQTT is persistent storage of messages.

When a client receives PUBACK (QoS = 1) or PUBCOMP (QoS = 2) from the server, it means the message has been sent successfully and the server has received it. QoS 0 messages are not acknowledged, so the client receives no such confirmation for them. Upon receiving the message, the server persistently stores it on the cloud disks of the Broker nodes, which support cross-AZ disaster recovery. Additionally, cloud disks offer backup points and replica storage, further ensuring message persistence and high data availability. Even if all Broker nodes fail, stored messages remain intact.
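As a rough illustration of which acknowledgment closes a publish at each QoS level in the MQTT protocol, the helper below maps a QoS value to its confirming packet. The function names `confirming_packet` and `is_confirmed` are made up for this sketch and do not belong to any client library. In standard MQTT, a QoS 0 publish has no acknowledgment packet, so the sketch returns `None` for it.

```python
from enum import IntEnum
from typing import Iterable, Optional


class QoS(IntEnum):
    AT_MOST_ONCE = 0   # fire and forget, no acknowledgment
    AT_LEAST_ONCE = 1  # acknowledged with PUBACK
    EXACTLY_ONCE = 2   # PUBREC / PUBREL exchange, completed by PUBCOMP


def confirming_packet(qos: int) -> Optional[str]:
    """Return the packet that tells the publisher the server has the message."""
    return {
        QoS.AT_MOST_ONCE: None,
        QoS.AT_LEAST_ONCE: "PUBACK",
        QoS.EXACTLY_ONCE: "PUBCOMP",
    }[QoS(qos)]


def is_confirmed(qos: int, received_packets: Iterable[str]) -> bool:
    """True once the confirming packet for this QoS level has arrived."""
    expected = confirming_packet(qos)
    return expected is not None and expected in set(received_packets)


qos1_done = is_confirmed(1, ["PUBACK"])
qos2_pending = is_confirmed(2, ["PUBREC"])            # handshake not finished yet
qos2_done = is_confirmed(2, ["PUBREC", "PUBCOMP"])
qos0_done = is_confirmed(0, [])                       # never confirmed by a packet
```

An application that needs proof of delivery before proceeding would therefore publish with QoS 1 or 2 and wait for the corresponding packet.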

After messages are persistently stored, you can view their details and trace information using the Query Ordinary Messages feature. By default, messages are retained on the server for three days. If this does not meet your requirements, you can purchase a Platinum Edition cluster and submit an after-sales ticket to have the retention period adjusted.
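To estimate whether a message should still be queryable, the default three-day retention can be applied to its storage time. This is a back-of-the-envelope sketch: the function names are hypothetical, and the assumption that retention is counted from the exact moment the message was stored is ours, not a documented guarantee.

```python
from datetime import datetime, timedelta, timezone

DEFAULT_RETENTION = timedelta(days=3)  # default retention stated above


def expires_at(stored_at: datetime, retention: timedelta = DEFAULT_RETENTION) -> datetime:
    """Time after which the message is assumed to be no longer retained."""
    return stored_at + retention


def is_retained(stored_at: datetime, now: datetime,
                retention: timedelta = DEFAULT_RETENTION) -> bool:
    """True while the message is still inside its retention window."""
    return now < expires_at(stored_at, retention)


stored = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
expiry = expires_at(stored)
still_there = is_retained(stored, datetime(2024, 5, 3, 12, 0, tzinfo=timezone.utc))
gone = is_retained(stored, datetime(2024, 5, 5, 0, 0, tzinfo=timezone.utc))
```

A cluster with an adjusted retention period would pass its own `retention` value instead of the default.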
